Microbiomes, big data, the Higgs boson, and brain-computer interfaces – these are only a few of the discoveries that made their mark in a decade of scientific revolutions that will change, and are already changing, our lives.

The future is here: Quantum computers

The first decade of the 21st century ended with the demonstration of what was called the “first complete quantum computer,” which uses quantum memory units, “qubits.” In contrast to conventional computers, where a bit can be either 0 or 1 in value, the qubit can hold both values simultaneously, or combinations thereof. This can greatly increase the computing capability of a quantum computer. The quantum computer unveiled in 2009 was a demonstration of capability, but obtaining significant computing capacity required increasing the number of qubits – the quantum computer’s basic information units – and curtailing the errors common in quantum components. Over the past decade, extensive research has explored the various ways of building quantum computers, especially those based on circuits made of superconducting materials.
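The idea of a qubit holding 0 and 1 at once can be illustrated with a few lines of Python. This is only a toy sketch, not how a real quantum computer is programmed: the state of one qubit is written as two amplitudes, a Hadamard gate (a standard textbook operation, chosen here purely for illustration) puts it into an equal combination of 0 and 1, and the helper `amplitude_count` (a hypothetical name) shows how quickly the number of values needed to describe a register grows with the number of qubits.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (amp0, amp1)."""
    amp0, amp1 = state
    s = 1 / math.sqrt(2)
    return (s * (amp0 + amp1), s * (amp0 - amp1))

def probabilities(state):
    """Probability of reading 0 and of reading 1 when the qubit is measured."""
    return tuple(abs(amp) ** 2 for amp in state)

def amplitude_count(n_qubits):
    """Number of amplitudes needed to describe a register of n qubits."""
    return 2 ** n_qubits

# A qubit that starts as a definite 0, like a conventional bit.
zero = (1.0, 0.0)

# After the gate it is an equal combination of 0 and 1.
superposed = hadamard(zero)
p0, p1 = probabilities(superposed)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")

# The description of the register doubles in size with every added qubit.
for n in (1, 2, 10, 50):
    print(f"{n} qubits -> {amplitude_count(n)} amplitudes")
```

The doubling with each added qubit is the reason a modest number of reliable qubits is expected to be so powerful, and also the reason such machines are hard to simulate on conventional computers.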

The competition to be first in this field is fierce, and tech giants like Google, IBM, and Intel are investing huge resources in it. Over the years, quantum computers with a growing number of components were launched; Google has held the record since 2018 with its 72-qubit processor, while IBM’s 53-qubit processor was made accessible to researchers through the cloud. Beyond the number of components, their accuracy is also key, and considerable resources have been invested on this front. In 2019, IBM presented the first commercial quantum computer, packed in a glass cube the size of a room. Today, researchers and developers can tap into quantum computing capabilities over the internet, a service provided by various companies.

The decade concluded with another milestone: In October 2019, Google announced it had achieved “quantum supremacy” – the power to solve a problem that even the world’s fastest supercomputers cannot solve in a reasonable amount of time. It was a significant accomplishment, and some see it as the beginning of the age of quantum computers, just as the Wright brothers’ first flight in 1903 marked the beginning of the powered aircraft era. However, the statement has been widely challenged, including by Google’s rivals at IBM, since supremacy was attained through a problem chosen specifically for that purpose. Still, it’s an important step on the way to unimaginable computing capabilities, and the future of this field looks more promising than ever.

Dr. Dan Yudilevich

Massive investments by tech giants: Quantum computing. Illustration: Science Photo Library

Breakthrough in genetic engineering

The genetic revolution, which began with the discovery of DNA’s structure and continued with the cracking of the genetic code and the development of advanced methods to read its sequence, has taken a giant stride towards an even deeper understanding of life and the genetic mechanism controlling it. The past decade introduced the gene editing revolution: For years, we could effectively read the genetic code, but editing it, inserting changes into its sequence in order to repair mutations, and silencing a specific gene or activating another were difficult and at times inaccurate. CRISPR technology, based on a set of natural defense proteins in bacteria, enabled scientists to efficiently and accurately change entire DNA sections of their choice at low cost.

The CRISPR revolution doesn’t stop in the world of genetic engineering. In medicine, for example, researchers are developing CRISPR-based methods for treating disease through direct intervention in the patient’s genome; the targets include genetic diseases such as sickle cell anemia and type 1 diabetes, as well as cancer, infectious diseases, and even obesity. CRISPR is also used to develop moths that make silk with spider web proteins and to control mosquito populations. And the list goes on. In the food industry, genetically engineered salmon (not made with CRISPR) was the first genetically engineered animal to receive FDA approval. At the end of 2018, a scientist in China announced that he had edited the DNA of two babies, leading to a media frenzy and demands for tighter supervision of the field. It’s still too early to tell where the genetic engineering revolution will take us, but life is a jungle, so at least we have a tool to get us through it.

Ido Kipper

Video of John Oliver talking about genetic engineering:

The computers that will replace us: Artificial intelligence and Big Data

Artificial intelligence, a field that develops software enabling computer systems to adopt “human-like” behavior or make decisions independently, for instance, through an independent learning process called “deep learning” or simulations of artificial neural networks, has undergone rapid development over the past decade.

Scientists have developed precise weather-forecasting models, computers that can infer their location from what they see, selective hearing aids, and even systems that read people’s thoughts. Using large databases, computers can create “fake news,” impersonate real people, and create pictures of people who never existed. Computers have even beaten humans at complex games, like chess, Go, and multiplayer poker.

In robotics, newer and more accurate sensors, together with machine learning, have given rise to the development of robots that can navigate themselves in unfamiliar surroundings or identify dangerous situations, using an artificial nervous system.

Learning systems are an important tool in mining data from the vast volumes of data we create every second: From photos and text messages, through location and medical details, to information on our shopping or when our printer needs ink. In 2010, we produced 1.2 zettabytes (a zettabyte is one sextillion, or 10^21, bytes) of data. In 2019, we produced 4.4 zettabytes and that rate is expected to continue increasing at breakneck speed.

The past ten years were the breakthrough decade for big data: Systems that attempt to identify significant patterns in large data banks, using advanced algorithms or deep learning and technologies that enable gathering, sharing, scanning, and storing vast amounts of data. Scientists have started to use these systems to improve medical diagnosis, forecast disease outbreaks, discover new particles, and of course, in commercial use. In the next decade, big data analysis will gradually take on a growing part in our life, and, just like any other technology, there may be those who will put it to misuse.

Amit Shraga

The rate of data production will continue to grow rapidly and with it, the methods for processing it. Big data. Photo: Shutterstock

The rise of immunotherapy: The immune system against cancer

Harnessing the immune system to fight cancer has been one of the most notable trends in the life sciences in the past decade. In the 19th century, some doctors believed there was a close connection between cancer and the immune system’s cells. In the second half of the 20th century, researchers understood that one of the roles of the immune system is to destroy cancer cells using mechanisms similar to those targeting viral infections and that some of the cancer cells fly under the immune system’s radar – thus enhancing their survival. Nonetheless, research in the field made no headway, and most of the progress in cancer research and medicine addressed cancer cells’ accelerated multiplication rate. Until the past decade, most medications against solid tumors extended patients’ lives by only a few months; the tumors typically regressed quickly and then returned in a much more aggressive form.

At the beginning of the decade, impressive results were obtained with medications targeting the receptors that help cancer cells evade the immune system. For the first time, patients’ lives were lengthened by five years or more. In parallel, a technology called CAR-T was developed, whereby some of the patient’s immune system cells are reengineered to attack the cancer cells more effectively. The method was developed in Israel and the technology shows promising results. Immunotherapy’s founding fathers, James P. Allison from the U.S. and Tasuku Honjo from Japan, shared the 2018 Nobel Prize in Physiology or Medicine. This great progress led to hundreds of clinical trials, some of which are in advanced stages.

Dr. Yochai Wolf

How does the immune system fight cancer? A video by Nature explains:

The world is on fire: Earth continues to heat up fast

Global warming didn’t transpire at the same rate throughout the 20th century. From the 1940s until the end of the 1960s, it paused, and even a cooling down began, but subsequently, the trend was reversed and warming ensued at a fast pace. The first decade of the 21st century gave new hope, because warming slowed down considerably, almost stopping altogether. However, that break ended in 2014, and since then, the warming rate has been very fast. Those two breaks are sometimes used as evidence against the connection between human activity and global warming; if the amount of greenhouse gases in the atmosphere continues to rise steadily, how is it that the global temperature doesn’t rise as well?

The explanation can be found in the Earth’s natural climate cycles, which are constantly changing because of changes in ocean currents, volcanic activity, and other factors. Adding the warming due to the rise in greenhouse gases to the natural cycles leads to times when there is no warming. In fact, if the concentration of greenhouse gases didn’t rise when it did, we would probably have seen a significant cooling down and not just a pause in global warming.
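The way a steady rise and a natural cycle combine into temporary pauses can be shown with a toy calculation. The numbers below are invented for illustration and are not climate data: a constant warming trend is added to a single sine-shaped natural cycle, and the code lists the years in which the combined curve fails to rise even though the trend never stops.

```python
import math

# All three values are hypothetical, chosen only to illustrate the argument.
TREND_PER_YEAR = 0.010   # steady warming from greenhouse gases, deg C per year
CYCLE_AMPLITUDE = 0.12   # size of the natural cycle, deg C
CYCLE_PERIOD = 60.0      # length of the natural cycle, years

def natural_cycle(year_index):
    """Temperature contribution of the natural cycle alone."""
    return CYCLE_AMPLITUDE * math.sin(2 * math.pi * year_index / CYCLE_PERIOD)

def temperature_anomaly(year_index):
    """Steady trend plus natural cycle."""
    return TREND_PER_YEAR * year_index + natural_cycle(year_index)

def pause_years(n_years):
    """Years in which the combined temperature did not rise from the year before."""
    return [
        year for year in range(1, n_years)
        if temperature_anomaly(year) <= temperature_anomaly(year - 1)
    ]

pauses = pause_years(120)
print("Years without warming:", pauses)
print("Total change over the period:",
      round(temperature_anomaly(119) - temperature_anomaly(0), 2), "deg C")

# During a pause the natural cycle alone is falling faster than the trend rises,
# so without the trend those years would have shown a clear cooling.
print("Cycle-only change in year 30:",
      round(natural_cycle(30) - natural_cycle(29), 4), "deg C")
```

In this sketch the pauses appear in two clusters, one per cycle, while the temperature at the end of the period is still well above where it started.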

The second decade of the 21st century marked the abandonment of the belief that humans are not a major factor in global warming. The years 2015-2018 were the warmest on record since the industrial revolution (in comparison to the 1951-1980 temperature average). The latest report from the Intergovernmental Panel on Climate Change (IPCC), from 2018, found that at the current warming rate, there will be a rise of 1.5 degrees Celsius, relative to the pre-industrial era, sometime between 2030 and 2052. In order to stop there and not exceed it, we need to reach zero emissions of greenhouse gases by 2055. In essence, if we don’t take immediate action to reduce greenhouse gas emissions, the scenario of stopping at a 1.5-degree increase will vanish.

Jonathan Group

We’ve stopped believing that man isn’t responsible for global warming. Illustration: Shutterstock

Our subtenants: How does the microbiome affect our lives?

Genetic sequencing technologies have led to another revolution – the understanding that the “guests” that live in our body affect almost every aspect of our lives. There are at least 5,000 types of bacteria in our body, and an unspecified number of fungi, amoebas, viruses, worms and others. The large majority of these live inside us naturally and do not cause disease, but they do influence appetite, weight gain, how diet drinks affect us, milk digestion, development and diagnosis of cancer tumors, the efficacy of medicines, pregnancy and child health, inflammations and many other processes inside our body, in both health and disease. In turn, many things influence our bacteria and change them, from antibiotics to space flight. In addition to research about our microbiomes, i.e., all the bacteria that live on and inside us, there are also studies that collect information on other microbiomes – from cattle and squid digestive systems to samples from the ground and sea, the Loki’s Castle hydrothermal vent field on the seabed between Greenland and Norway, and many more.

Dr. Gal Haimovich

Life inside us: a video on the microbes inside us and their effect

A new look at the universe: gravitational waves and neutrinos

In 1916, Albert Einstein published his theory of general relativity, which describes the behavior of masses, gravitational forces, and the dynamics of space and time themselves. One of its more surprising predictions was the existence of gravitational waves – ripples in the fabric of space-time, generated by large accelerating masses. When he came up with the theory, Einstein thought it would never be possible to measure these waves because their impact on our space is smaller than the movement of a single atom. Proof came almost 100 years later: In 2015, gravitational waves, generated by the collision of two black holes in a remote galaxy, were measured for the first time. The discovery was awarded the 2017 Nobel Prize in Physics.

How is such a weak phenomenon measured? The LIGO (short for Laser Interferometer Gravitational-wave Observatory) detector in the U.S. is an interferometer with two perpendicular arms, each four kilometers long. A laser beam split between the two arms travels back and forth hundreds of times between mirrors stationed along them before recombining. A passing gravitational wave slightly stretches one arm as it shortens the other, changing the length of the laser beams’ paths and causing one beam to fall out of step with the other. This gap can be measured with precise optical equipment. LIGO consists of two identical detectors located about 3,000 kilometers apart in the U.S.; a third detector, Virgo, operates in Europe. The identification of a gravitational wave in all three detectors enables physicists to calculate the direction of, and the distance to, the source of the waves.
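To get a feel for how small the measured effect is, the change in arm length can be estimated from the arm length given above. This is an order-of-magnitude sketch only: the strain value (the fractional stretching caused by the wave) is an assumed typical figure rather than a number from this article, and the proton diameter is an approximate value used for comparison.

```python
ARM_LENGTH_M = 4_000.0        # from the text: each arm is four kilometers long
ASSUMED_STRAIN = 1e-21        # assumption: typical fractional stretch from a detectable wave
PROTON_DIAMETER_M = 1.7e-15   # approximate, for comparison only

def arm_length_change(strain, arm_length_m):
    """Change in arm length, in meters, for a given fractional strain."""
    return strain * arm_length_m

delta_m = arm_length_change(ASSUMED_STRAIN, ARM_LENGTH_M)
fraction_of_proton = delta_m / PROTON_DIAMETER_M

print(f"Arm length change: {delta_m:.1e} m")
print(f"As a fraction of a proton's diameter: {fraction_of_proton:.4f}")
```

Under these assumptions the arm changes by a few thousandths of the width of a proton, which is why the beams are bounced back and forth many times and why the optical measurement has to be so precise.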

Since the detectors started operating, they have identified about 20 black hole collisions and at least one neutron star collision, which was also picked up by optical and radio telescopes. A huge gravitational wave detector is planned for the next phase; it will measure laser beams between satellites and will be sensitive enough to pick up gravitational waves that cannot be identified by a “small” land detector, just a few kilometers long.

In the past decade, another type of exotic astronomy joined gravitational wave detectors: neutrino detectors. Neutrinos are elusive particles, which hardly interact with anything and are thus quite difficult to detect. The construction of IceCube, a neutrino observatory made of sensors embedded deep in the Antarctic ice, was completed in 2010, and in 2013, its first results were published. Measuring the flow of particles and identifying their source help develop or refute models of energetic explosions and the creation of cosmic rays.

Using gravitational waves and neutrinos to identify astronomical phenomena significantly expands astronomers’ toolbox, which until now was limited to measuring electromagnetic radiation, from visible light to radio waves and x-rays. These new tools enable the gathering of new data on the most exotic and energetic phenomena in the universe.

Guy Nir

Video by Veritasium explaining the discovery of gravitational waves

Brain-machine interfaces lead to new treatments

A brain-machine interface, or brain-computer interface, is an external device that “communicates” directly with the brain. In the past decade, great progress was made with interfaces that learn brain patterns during movement or movement planning and then send signals to the limbs of patients with spinal cord injury, thereby bypassing the damaged spinal cord, which normally serves as a relay station between the brain and the limb muscles.

For instance, brain-machine interfaces learned brain patterns through fMRI and succeeded in restoring movement to patients with spinal cord injury. In 2016, devices that transfer electric signals from the brain helped paralyzed patients move using the “power of thought” and devices that transfer the information to limbs helped paralyzed patients rise from their wheelchairs and walk. Another use for the device is reconstructing the sense of touch in amputees. Prosthetic limbs do not deliver somatosensory information, which in healthy people provides important feedback to the area of the brain responsible for executing movement. In 2019, researchers reported the reconstruction of the sense of touch in a prosthetic leg and hand.

What other uses can these interfaces develop? One constraint is the need to insert implants into the brain, but recently, an interface was developed that can learn brain patterns without being implanted, and it seems that in the coming decade, we’ll see more of these interfaces and perhaps also miniaturized interfaces that can be carried around, making their use even more practical on a day-to-day basis.

Dr. Ido Magen

The link between the brain and computers is getting stronger thanks to developing technologies. Robotic hands typing. Illustration: Science photo library

Big technology, miniaturized electronics

If we had to choose a single product that best epitomizes the past decade, it would be the smartphone. After coming into our lives at the end of the previous decade, it became an essential part of modern life and completely changed the way we communicate and access information and services. Its development and improvement were possible thanks to the miniaturization of electronic chips. This technology enables us to integrate computer components, like processors and memory, but also a screen, a camera, and various sensors, into a hand-sized device.

Electronic miniaturization is measured by the size of the transistor component: At the beginning of the decade, the technology record was 32 nanometers (0.000000032 meters) and it is expected that during 2020, a 5-nanometer transistor will become available. Component miniaturization follows Moore’s Law, according to which the number of transistors on a microchip doubles every two years. And indeed, component density has multiplied by a factor of 40, which is what enabled the leap in the device’s computing power and capabilities. However, technology today is pushing the boundaries of physics; at 5 nanometers – the size of just a few dozen atoms – the chips are exposed to new phenomena that can impair their reliability. The challenges have led to the testing of alternative technologies that will allow continued performance improvement. One such alternative is the replacement of silicon, the main component in computer chips, with two-dimensional materials (sheets only a few atoms thick), which may enable increased density and provide mechanical flexibility. Another interesting direction is electric circuits based on biological molecules, like DNA, which could also help with further miniaturization.
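The factor of 40 can be checked with a short calculation. The sketch below assumes, as an idealization, that the number of transistors fitting in a given area grows as the inverse square of the transistor size, and compares the result with what Moore’s Law would predict for one decade. The function names are illustrative only.

```python
def density_gain_from_feature_size(old_nm, new_nm):
    """Idealized gain in transistors per unit area when the feature size shrinks.

    Assumes density scales with the inverse square of the feature size.
    """
    return (old_nm / new_nm) ** 2

def moore_law_gain(years, doubling_period_years=2.0):
    """Gain predicted by a doubling of transistor count every doubling period."""
    return 2 ** (years / doubling_period_years)

gain_from_size = density_gain_from_feature_size(32, 5)
gain_from_moore = moore_law_gain(10)

print(f"Shrinking from 32 nm to 5 nm: about {gain_from_size:.0f} times denser")
print(f"Moore's Law over 10 years:    about {gain_from_moore:.0f} times denser")
```

The two estimates, roughly 41 and 32, are of the same order, which is the sense in which miniaturization over the decade kept pace with Moore’s Law.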

Miniaturization of electronic components was very significant over the past decade and enabled the development of additional technologies, from smart watches to drones and autonomous vehicles, which will continue to influence our lives greatly in the next decade.

Dan Yudilevich

Miniaturization changed our lives. Smart phones and watches are just the tip of the iceberg. Photo: Shutterstock

Aiming for the stars: Discovering exoplanets

For many years, scientists have tackled the question of how many planets there are. Up until 1995, our sun was the only star we knew to have planets around it, and even afterwards, discoveries of planets in other solar systems were few and far between. The Kepler space telescope changed all of that. Launched in 2009, it was tasked with locating distant planets by identifying the minor decline in a star’s light intensity when a planet moves in front of it and slightly reduces the amount of light reaching the telescope. The Kepler telescope enabled researchers to reveal more than 4,000 new planets, discoveries that proved, for the first time, that the galaxy is full of planets. Solar systems are not uncommon; in fact, the number of planets is comparable to the number of stars in the galaxy. The telescope also identified systems with planets similar to the Earth, and in some cases, even planets that are in the habitable zone, that is, at a distance from the star that does not preclude the presence of water on the planet. In recent years, planets were discovered around a variety of suns, some quite close to Earth, such as red dwarfs or even "dead" white dwarfs, as well as solar systems with multiple planets.
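How minor is the decline in light that such a telescope has to detect? A rough estimate follows from geometry: the planet blocks a fraction of the star’s disk equal to the square of the ratio between the two radii. The sketch below uses approximate radii of the Earth, Jupiter, and the Sun, which are standard values and not figures from this article, and ignores finer effects such as the star being dimmer near its edge.

```python
# Approximate mean radii in kilometers (standard reference values).
SUN_RADIUS_KM = 695_700.0
EARTH_RADIUS_KM = 6_371.0
JUPITER_RADIUS_KM = 69_911.0

def transit_depth(planet_radius_km, star_radius_km):
    """Fraction of the star's light blocked while the planet crosses its disk."""
    return (planet_radius_km / star_radius_km) ** 2

earth_depth = transit_depth(EARTH_RADIUS_KM, SUN_RADIUS_KM)
jupiter_depth = transit_depth(JUPITER_RADIUS_KM, SUN_RADIUS_KM)

print(f"Earth-size planet, Sun-like star:   {earth_depth:.4%} of the light")
print(f"Jupiter-size planet, Sun-like star: {jupiter_depth:.2%} of the light")
```

A giant planet dims a Sun-like star by about one percent, while an Earth-size planet dims it by less than one hundredth of a percent, which is why finding Earth-like planets requires a dedicated space telescope.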

In the past decade, our beliefs about planets in our galaxy have changed. Today, we have a better grasp of how they form and how solar systems come to be, and we understand that Earth is not unique in our universe: Many planets in our galaxy may have liquid water on them. Do they also have life? This question faces several programs launched towards the end of the decade. The TESS space telescope, launched in 2018, will attempt to find planets around stars close to Earth, and the recently launched CHEOPS telescope will expand our knowledge of certain planets, including whether they have atmospheres or other conditions to sustain life – at least life as we know it.

Guy Nir

Short National Geographic video on planets in other solar systems

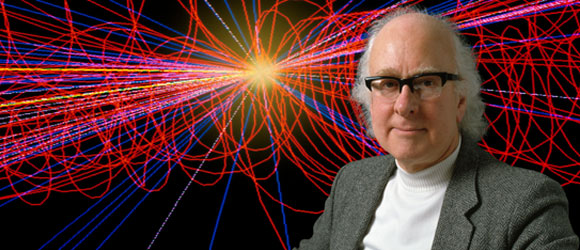

The last piece in the puzzle (for now): Discovering the Higgs particle

Over the past decades, physicists discovered more and more subatomic particles, and with time, they realized that all particles and forces acting among them, except for gravity, can be encapsulated by the combination of 17 elementary particles. The theory, which was named the Standard Model, explained all of the phenomena in the world inside atoms precisely, almost perfectly.

According to the model, particles of material cannot naturally have mass. However, in the 1960s, a mechanism for obtaining mass indirectly was offered: Interactions with a scalar field. The Higgs Field, named for one of the scientists who came up with the idea, is unique in that its value is not zero even in a state of rest, with no energy. Higgs Field exists in empty space, too, and the particles in the Standard Model “sense” it. The particles’ interaction with the field causes them to behave as if they have mass. There is no practical way to differentiate between interaction with the field and the mass of the particle itself, but the field can be found. If given enough energy, a Higgs particle can be formed in the field, which unlike the other particles, does have mass. In order to discover it, a very strong particle accelerator was needed, that would drive particles to very high energies.

Such is the Large Hadron Collider (LHC) in Switzerland, where groups of protons are accelerated to a speed close to that of light. When two groups of protons moving in opposite directions collide with each other, billions of particles are emitted. An array of detectors measures the amount of energy and the direction in which the particles were emitted. The search for the Higgs particle was particularly challenging, as the theory did not predict its mass. In 2012, the researchers isolated traces of the Higgs particle, and discovered that its mass is 125 billion electronvolts (about 133 times the mass of a proton). The discovery of the Higgs boson completed the Standard Model, which summarizes our knowledge of matter and the forces acting within it, and led to the awarding of the 2013 Nobel Prize in Physics to Peter Higgs and François Englert.
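Two of the numbers in this description can be checked with a short calculation: how heavy the Higgs particle is compared with a proton, and how close to the speed of light the protons travel. The proton mass is a standard value; the beam energy of 4 trillion electronvolts per proton is an assumption about the accelerator’s 2012 operation and does not come from this article.

```python
import math

HIGGS_MASS_GEV = 125.0        # from the text: 125 billion electronvolts
PROTON_MASS_GEV = 0.938272    # standard value for the proton's rest mass
ASSUMED_BEAM_ENERGY_GEV = 4_000.0   # assumption: energy per proton in 2012

def mass_ratio(heavy_gev, light_gev):
    """How many times heavier one particle is than another."""
    return heavy_gev / light_gev

def speed_fraction_of_light(total_energy_gev, rest_mass_gev):
    """Speed as a fraction of the speed of light, from special relativity."""
    gamma = total_energy_gev / rest_mass_gev
    return math.sqrt(1.0 - 1.0 / gamma ** 2)

ratio = mass_ratio(HIGGS_MASS_GEV, PROTON_MASS_GEV)
beta = speed_fraction_of_light(ASSUMED_BEAM_ENERGY_GEV, PROTON_MASS_GEV)

print(f"Higgs mass / proton mass: about {ratio:.0f}")
print(f"Proton speed: {beta:.10f} of the speed of light")
```

Under the assumed beam energy, the protons fall short of the speed of light by only a few parts in a hundred million.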

The Standard Model describes nature well, but does not explain some physical phenomena, such as dark matter and the mass of the neutrino (which the model treats as massless). These mysteries may be solved in the next decade.

Guy Nir

A prediction that took almost five decades to find supportive evidence. Physicist Peter Higgs. Photo: Science photo library

Biology in a new light: Innovative microscopic technologies

The light microscope has been used by biologists for hundreds of years, but over the past decade, the use of microscopy in biology research has considerably expanded. In the previous decade, the diffraction barrier, which limits the resolution of a light microscope to about 200 nanometers (a nanometer is one billionth of a meter), was broken with the development of the super-resolution microscope. In the current decade, we have witnessed novel developments like “CLARITY,” a technique that renders intact biological tissues, even an entire mouse, transparent; and “expansion microscopy,” which provides super-resolution capabilities by expanding the distance between molecules.
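The figure of about 200 nanometers follows from the classic estimate of the diffraction limit, in which the smallest resolvable distance is the wavelength of the light divided by twice the numerical aperture of the lens. The sketch below applies that formula and then shows why physically expanding a sample helps. The wavelength, numerical aperture, and expansion factor are illustrative assumptions, not values from this article.

```python
ASSUMED_WAVELENGTH_NM = 550.0     # assumption: green light
ASSUMED_NUMERICAL_APERTURE = 1.4  # assumption: a high-quality oil-immersion lens
ASSUMED_EXPANSION_FACTOR = 4.0    # assumption: sample enlarged fourfold in each direction

def diffraction_limit_nm(wavelength_nm, numerical_aperture):
    """Smallest resolvable distance of a conventional light microscope."""
    return wavelength_nm / (2.0 * numerical_aperture)

def effective_resolution_nm(limit_nm, expansion_factor):
    """Resolution relative to the original sample after it has been expanded."""
    return limit_nm / expansion_factor

limit = diffraction_limit_nm(ASSUMED_WAVELENGTH_NM, ASSUMED_NUMERICAL_APERTURE)
expanded = effective_resolution_nm(limit, ASSUMED_EXPANSION_FACTOR)

print(f"Diffraction limit: about {limit:.0f} nm")
print(f"After expanding the sample: about {expanded:.0f} nm")
```

The microscope itself is unchanged in expansion microscopy; the details simply move far enough apart to be told from one another.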

Just before the beginning of the present decade, another breakthrough was achieved – correlative light-electron microscopy (CLEM). The method combines imaging of a live biological sample in a fluorescence microscope with imaging of the same sample in an electron microscope after the cells have been fixed. The combination makes it possible to identify cellular structures and biological molecules that could not be identified using the electron microscope alone, at a nanometer-scale resolution not possible even with a super-resolution microscope. The method has improved during the current decade, and computational developments now enable creating a 3D picture of the cell in nanometric resolution.

The current decade has also been characterized by the development and growing application of innovative computing technologies, such as machine learning and neural networks, to create software that can process hundreds of thousands of microscopic pictures, search for patterns in them, and extract information that the human brain could never have found.

Dr. Gal Haimovich

New technologies shed light on the study of life. A light microscope in biological research. Photo: Science Photo Library

Life signs: Water in the solar system

Of the eight planets in our solar system, two are gas giants with multiple moons: Jupiter and Saturn. In recent years, their large moons became the focus of research on various interesting phenomena, such as liquid methane rivers and lakes on Titan, one of Saturn’s moons, or the tidal forces that create tectonic movement and volcanic activity on Io, Jupiter’s moon. Large underground oceans have been identified in three other moons – Ganymede, Europa, and Enceladus. As the three are relatively distant from the sun, their surfaces are completely frozen. But the gas giants’ gravity exerts tidal forces, which warm the moons’ interiors enough to sustain an underground ocean covered by a layer of ice dozens of kilometers thick. Some of these oceans hold more water than all the oceans on Earth combined.

Speculations about the existence of the oceans were validated in the last decade thanks to observations and measurements of vapor streams emitted from the surface of these moons, including those conducted by the Cassini spacecraft, which explored Saturn and its moons. Some of the findings indicated that the water contains organic matter, which could be evidence for the existence of microbial life.

In the upcoming decade, the launch of two research missions to Jupiter’s moons Europa and Ganymede is expected. European spacecraft JUICE and American spacecraft Europa Clipper will conduct measurements that will provide new information about these oceans and possibly about the chances of finding evidence for life on them.

Eran Vos

Is there life in the ocean under the frozen outer layer? The moon Enceladus in a picture taken by the Voyager spacecraft. Photo: NASA

Each one is unique: single-cell analysis

The cells in our body are not identical – a nerve cell is different from a skin cell, and both are dissimilar to gut cells. But over the past decade, we’ve also found that there are disparities among cells of the same type: Each cell behaves a bit differently from its neighbor. Microscopy technologies were the first to show this, but the emergence of precision technologies for genetic sequencing, which enable single-cell analysis, has made the research field thrive in the current decade. A marked example is an article published in 2011, which demonstrated how a tumor develops through the DNA sequencing of 200 tumor cells.

Researchers today have the ability to sequence DNA and RNA in single cells in experiments that examine thousands of cells and more, and to identify epigenetic changes, i.e., those connected to activating or silencing genes using molecules that bond to them without changes to the DNA sequence itself. This makes it possible to reveal differences between seemingly identical cells, discover new cell subtypes in a tissue that appears uniform, and even follow all stages of fetal development and learn about the changes taking place in each cell. This technology has facilitated the Human Cell Atlas, which maps the entire human body’s cells and their traits.

The success of single-cell genetic sequencing technologies has led the way, in the past few years, to attempts at proteomic analysis – that is, the identification of a single cell’s protein composition. These technologies, along with techniques for sample collection from single cells and analysis methods of minute amounts of protein, have set in motion the single-cell proteomic revolution, which may reach its peak in the next decade.

Dr. Gal Haimovich

Better understanding of each cell is the key to personalized medicine. Photo: Science photo library

Man in search for roots: New discoveries in human evolution

In the past decade, genetics has entered the field of human evolution and changed much of what we thought we knew. In May 2010, the Neanderthal genome was published, based on the DNA extracted from three bones of this hominid species. In that same year, researchers also sequenced the DNA from two teeth and a finger bone thought to belong to Neanderthals from the Denisova Cave in Siberia. The sequence was sufficiently different for these fossils to be considered as a separate species or subpopulation, the Denisovans. Later, an additional tooth and a jaw belonging to this species were found in a different region, Tibet, and identified using DNA or protein sequence.

The genetic findings revealed that our ancestors met the Neanderthals and the Denisovans after they had left Africa and had offspring with them. Today, all non-African humans have parts originating in the Neanderthals in their genomes, and some Asian populations also inherited Denisovan DNA.

New species in the human family have also emerged during the past ten years in the “conventional” way, i.e., through the discovery of fossils with unique traits. Australopithecus sediba, a species that lived two million years ago, was found in South Africa in 2010. Homo naledi, discovered in 2015, inhabited an area not far from there, but more than 1.5 million years later. In 2019, the discovery of an early hominin in the Philippines, Homo luzonensis, was announced; its classification is still being debated.

Over the past decade, there were quite a few new discoveries on the recent history of our own species, Homo sapiens. The common explanation was that our species appeared some 200,000 years ago, until skulls that seemed almost modern but were actually 300,000 years old were found in Morocco. Evidence that Homo sapiens left Africa for Israel almost 200,000 years ago, apparently en route to southern Europe, was also uncovered. While some researchers are trying to determine where in Africa our species developed, in part by using genetic studies, others claim it did not evolve from a single source, but rather from a variety of populations that mixed with each other.

Dr. Yonat Eshchar

A video from the American Museum of Natural History on human evolution
